Writing Working Group (Writing WG)

Come join the Writing Working Group on Tuesdays @1:30-4:30pm in the Collaboratorium (IRIC 352) for a consistent and accountable time and place to write!

Our last Brown Bag Lunch series of the year! Come join the IMCI crew for our yearly Brainstorming Event and Luncheon happening Monday, December 8, from 12:30-2pm in the IRIC Atrium. Due to limited supplies, only the first 55 people to RSVP will be invited. This event will be a social gathering centered around…

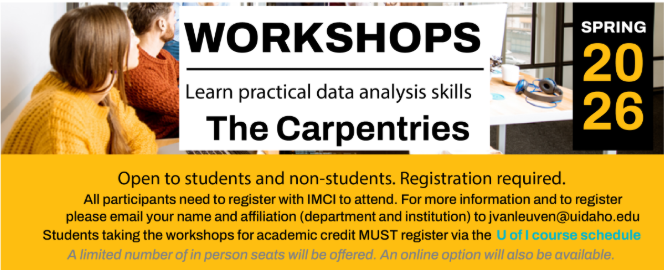

Sign up now! The Carpentries is an international community of learners and instructors dedicated to the importance of software and data in research. Learn more at www.carpentries.org. Open to students and non-students. Registration required. All participants need to register with IMCI to attend. For more information and to register please email your name and affiliation…

Are you a biomedical researcher looking to generate preliminary data? IMCI is proud to announce a new deadline for our distinguished Pilot Project Program. We invite letters of intent for the 2026 Pilot Project Program funded by NIH P30GM159567. The objective of this program is to provide funding to stimulate model-based, biomedical research at the…

In this work-in-progress, Dr. Fehrenkamp will present an IMCI-funded pilot in which she is recruiting ~30 breastfeeding mother–infant dyads for an intensive 24-hour protocol to quantify cortisol and melatonin dynamics across the maternal–milk–infant triad. Each dyad completes up to 10 repeated collections of maternal saliva, breast milk, and infant saliva over two days, along with…

Greetings, IMCI crew. The University of Idaho will adjourn for the week of 11/24 for Fall Break. IMCI will continue to support our collaborators as usual, though all IMCI events will be postponed until the semester resumes.

Who are the Apollonians? Don’t ask us, but find out this coming Monday with our session leader, Katharine Kolpan, as she leads us through a discussion titled "Who Are These Apollonians Anyway? Isotopes, Migration, and Health at Ancient Apollonia Pontica (7th-3rd centuries BC), Bulgarian Black Sea Coast." The ancient port city of Apollonia Pontica was an…

Achieving trustworthy AI in healthcare remains a significant challenge, as existing eXplainable Artificial Intelligence (XAI) methods like attention maps or LIME/SHAP are often dismissed by clinicians and patients as uninterpretable. While reasoning LLMs initially seemed promising for generating natural-language explanations, they often create unfaithful rationalizations that obscure a model’s true logic. To solve this,…

This talk explores Mycoplasma ovipneumoniae, a bacterium that rarely causes respiratory disease in domestic sheep and goats but poses a deadly threat to their wild counterparts. When domestic herds come in contact with bighorn sheep, the pathogen can spread, leading to severe pneumonia outbreaks impacting wild populations.

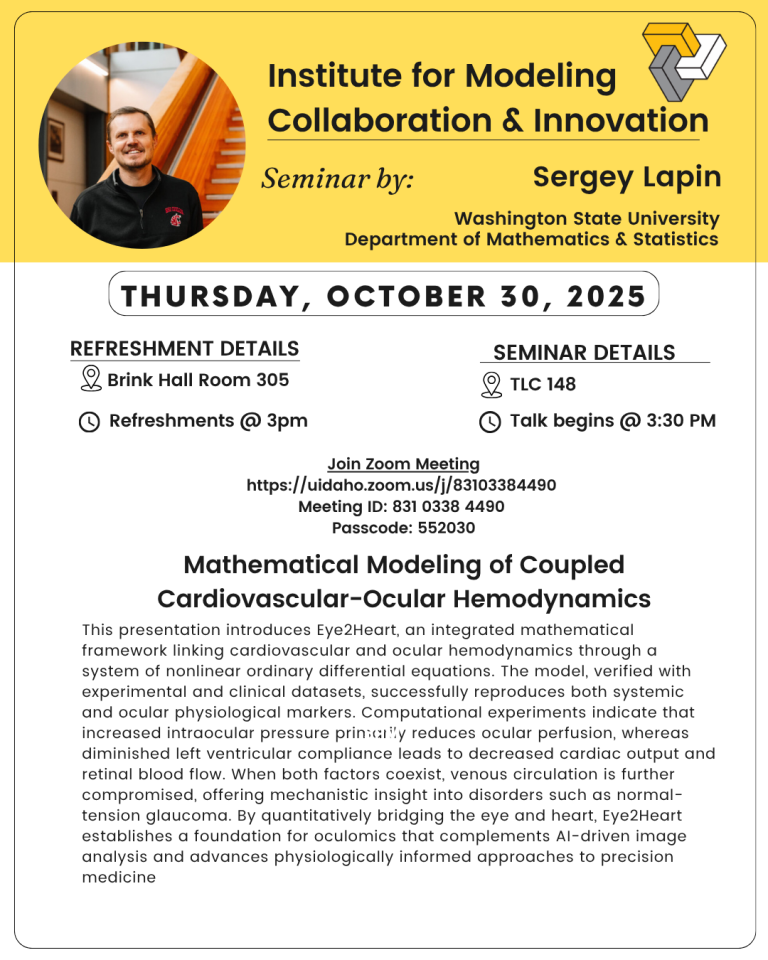

Come join us today at 3:00pm in Brink Hall 305 for refreshments and at 3:30pm in TLC 148 for a talk on Mathematical Modeling of Coupled Cardiovascular-Ocular Hemodynamics hosted by Sergey Lapin of the University of Washington! Details can be found below: Refreshments in Brink Hall Room 305 at 3:00 p.m. Join Zoom Meeting: https://uidaho.zoom.us/j/83103384490 Meeting ID: 831 0338 4490…